How to Optimize Content for LLMs?

The digital landscape is undergoing a profound transformation, driven by the rapid advancements in Artificial Intelligence, particularly Large Language Models (LLMs).

These sophisticated AI systems, such as Google’s Gemini, OpenAI’s ChatGPT, and Anthropic’s Claude, are fundamentally changing how users interact with information and how search engines deliver answers.

For content creators, marketers, and businesses, simply optimizing for traditional keyword rankings is no longer sufficient. The imperative now is to optimize content for LLMs, adopting a “people-first” approach that ensures clarity, trustworthiness, and deep relevance for both human readers and AI systems.

This in-depth guide will explore the strategies for optimizing your content for LLMs, incorporating the latest insights and supporting statistics to help you attract organic traffic and secure top SERP positions in the AI-driven future.

The Evolving Search Landscape: Why LLMs Demand a New Approach

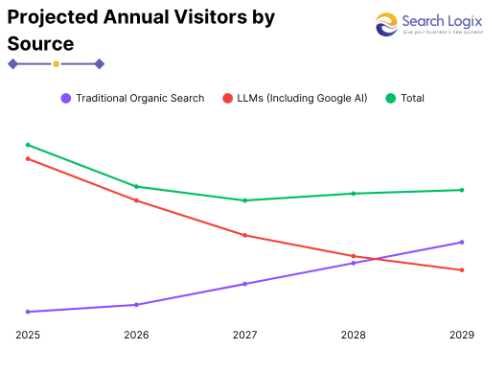

The rise of LLMs marks a significant shift from simple keyword matching to semantic understanding and contextual relevance.

Unlike traditional search algorithms that might prioritize pages based on keyword density and backlinks, LLMs delve into the meaning of content, the relationships between entities, and the underlying user intent behind a query.

Key Statistics Highlighting the LLM Revolution:

- The LLM market is projected to reach a staggering $82.1 billion by 2033, indicating massive growth and integration across industries.

- As of 2025, an impressive 67% of organizations worldwide have already adopted LLMs to support their operations with generative AI.

- 88% of professionals report that using LLMs has improved the quality of their work, emphasizing their transformative impact.

- LLM-powered applications are projected to grow to 750 million globally by 2025, signaling an explosion in AI-driven interfaces where your content needs to be discoverable.

These figures underscore the urgency for content creators to adapt. Your content must be structured and written in a way that LLMs can easily parse, comprehend, and cite in their generated responses, whether it’s an AI Overview in search results or a direct answer in a chatbot.

Understanding How LLMs Consume Content

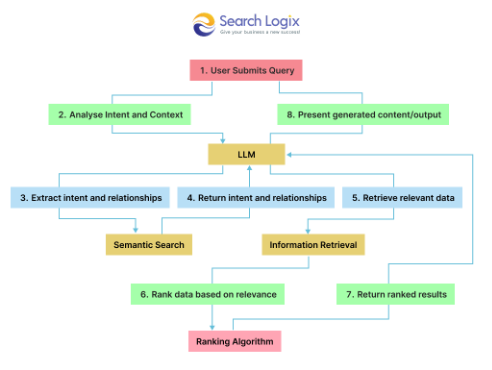

LLMs don’t “read” content in the same way humans do. They break text into “tokens” and use complex algorithms to identify patterns and relationships and to extract factual information. They typically rely on two primary sources:

- Training Data: The vast datasets of text and code on which the models were initially trained.

- Retrieval-Augmented Generation (RAG): For real-time information, many LLMs, especially those integrated with search, use RAG systems to fetch relevant, up-to-date information from the live internet. This is where your website’s content comes into play.

For RAG systems, the quality, structure, and authority of your content directly influence whether it gets selected and cited.
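To make the retrieval step more concrete, here is a minimal sketch of how a RAG-style system might rank passages from a page against a user question. It is a toy illustration, not how any particular product works: production systems typically use subword tokenizers and embedding-based similarity rather than the simple word overlap assumed here, and the function names (`tokenize`, `score`, `retrieve`) and sample passages are hypothetical.

```python
import re

def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; real LLM tokenizers use subword units instead."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query: str, chunk: str) -> float:
    """Fraction of distinct query terms that also appear in the chunk."""
    query_terms = set(tokenize(query))
    if not query_terms:
        return 0.0
    chunk_terms = set(tokenize(chunk))
    return len(query_terms & chunk_terms) / len(query_terms)

def retrieve(query: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks ranked by term overlap with the query."""
    ranked = sorted(chunks, key=lambda c: score(query, c), reverse=True)
    return ranked[:top_k]

# Hypothetical passages taken from a single page.
chunks = [
    "What is LLM optimization? It is the practice of structuring content "
    "so AI systems can parse and cite it.",
    "Our company was founded in 2010 and has offices in three countries.",
    "How do LLMs work? They split text into tokens and predict the next token.",
]

best = retrieve("what is llm optimization", chunks, top_k=1)
print(best[0])
```

Even in this simplified form, the passage that restates the question and answers it directly wins, which is the intuition behind the structural advice in the rest of this guide.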

Pillars of LLM-Optimized Content: A People-First Strategy

Optimizing for LLMs is fundamentally about creating high-quality, helpful content for humans. The characteristics that make content excellent for people also make it highly parsable and valuable for AI.

1. Structure for Maximum Clarity and Digestibility

LLMs thrive on structured, organized information. A clear content hierarchy allows AI to quickly identify main topics, subtopics, and direct answers.

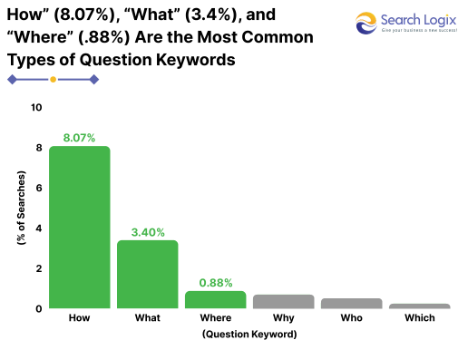

- Logical Headings (H1, H2, H3, etc.): Use descriptive headings that outline your content’s flow. Your H1 should clearly state the main topic, while H2s and H3s should break down subtopics. Consider phrasing headings as questions (e.g., “What is LLM Optimization?” or “How Do LLMs Work?”). This aligns perfectly with how users interact with AI.

- Concise Paragraphs and Sentences: Avoid dense blocks of text. Break down complex ideas into short, focused paragraphs, ideally one idea per paragraph. Shorter sentences (e.g., aiming for an average sentence length under 20 words) enhance readability for humans and improve AI parsing.

- Bullet Points and Numbered Lists: These are gold for LLMs. They allow AI to quickly extract key facts, steps, or features. Using lists for definitions, advantages, disadvantages, or process steps increases the likelihood of your content being used for summaries or direct answers.

- Front-Load Key Information: Start sections and articles with direct answers or summaries. LLMs often prioritize content found at the beginning of a section or page for generating concise responses. A “TL;DR” (Too Long; Didn’t Read) or “Key Takeaways” section at the start can serve as a cheat sheet for AI.

2. Focus on Semantic Depth and Topical Authority

Beyond individual keywords, LLMs understand the semantic relationships between words, concepts, and entities.

- Comprehensive Topic Coverage: Don’t just skim the surface. Cover topics in depth, addressing various facets, related concepts, and common user questions. This builds topical authority, signaling to LLMs that your content is a comprehensive resource.

- Entity Optimization: Consistently refer to people, organizations, products, and concepts (entities) by their proper names. LLMs build “knowledge graphs” based on these entities. The more accurately and consistently you refer to them, the better LLMs can understand and connect your content to relevant searches.

- Semantic Keyword Strategy: Integrate not just exact-match keywords but also synonyms, related terms, and conversational phrases. LLMs understand the context, so natural language is key. For example, if discussing “content marketing,” also include terms like “content strategy,” “digital content,” “audience engagement,” etc.

- Internal Linking for Context: Strategically link to other relevant, authoritative pages on your own site. This creates topic clusters and helps LLMs understand the breadth and depth of your knowledge on a subject.

3. Build Trust and Credibility (E-E-A-T)

Google’s emphasis on Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) is more critical than ever for LLM visibility. LLMs are designed to provide reliable information, and they prioritize sources that demonstrate strong E-E-A-T.

Demonstrate Expertise and Experience:

- Author Bios: Include detailed author bios with credentials, experience, and relevant qualifications.

- First-Hand Experience: Share unique insights, original research, case studies, and personal experiences. Content featuring original statistics and research findings can see 30-40% higher visibility in LLM responses, as LLMs seek concrete data to support claims.

Establish Authoritativeness:

- Cite Reputable Sources: Link to and reference authoritative external sources to back up your claims.

- Digital PR and Mentions: Build strong topic-brand associations by securing mentions on reputable third-party websites, industry directories (like G2 or Capterra), and even Wikipedia (if notable). Consistent mentions across authoritative sources help LLMs associate your brand with specific topics.

- Proprietary Frameworks/Branded Content: Develop and publish unique models, frameworks, or methodologies. LLMs often prioritize and cite original thought leadership. Embedding brand names and unique terminology in this kind of content has been associated with a 20% uptick in brand mentions in AI-generated summaries.

Cultivate Trustworthiness:

- Accuracy: Ensure all information is factually correct and up-to-date. Inaccurate information can damage your credibility with both users and LLMs.

- Transparency: Clearly state your sources for data and claims.

- Reviews and Testimonials: Public social proof, reviews on platforms, and discussions (e.g., on Reddit) are signals that LLMs consider when evaluating content reliability.

4. Optimize for Multimodal Content and Accessibility

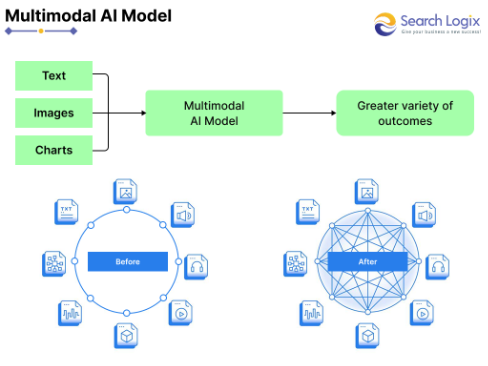

LLMs are becoming increasingly multimodal, capable of understanding and generating content across text, images, and even video.

- High-Quality Images and Videos: Support your textual content with relevant, high-quality visuals. Multimodal content is more engaging for users and provides additional context for AI.

- Descriptive Alt Text and Captions: Always use descriptive alt text for images and provide informative captions. This helps LLMs understand the visual content and its relevance to the surrounding text.

- Video Transcripts: Provide full transcripts for video content. This makes your video content crawlable and indexable by LLMs, allowing them to extract key information.

- Accessibility: Ensure your content is accessible to all users. This includes proper semantic HTML, sufficient color contrast, and clear navigation. Accessible content is also inherently easier for AI to process.
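As a rough way to audit the alt-text advice above, the sketch below scans a page's HTML for images whose alt text is missing or blank. It uses only Python's standard `html.parser` module; the class and function names are hypothetical, and the check is deliberately simple (it does not judge whether the alt text is actually descriptive).

```python
from html.parser import HTMLParser

class AltTextAuditor(HTMLParser):
    """Records the src of every <img> whose alt attribute is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # A valueless or absent alt attribute comes back as None.
        alt = (attributes.get("alt") or "").strip()
        if not alt:
            self.missing.append(attributes.get("src") or "(no src)")

def images_missing_alt(html: str) -> list[str]:
    """Return the src values of images that lack usable alt text."""
    auditor = AltTextAuditor()
    auditor.feed(html)
    return auditor.missing

sample = (
    '<img src="adoption-chart.png" alt="Bar chart of LLM adoption by industry">'
    '<img src="hero.png">'
    '<img src="team.png" alt="  ">'
)
print(images_missing_alt(sample))
```

Purely decorative images may intentionally carry an empty alt attribute, so treat the output as a review list rather than a list of errors.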

5. Leverage Structured Data (Schema Markup)

While the direct impact of structured data on LLM visibility is debated, it gives search engines and LLMs explicit, machine-readable signals about your content.

- Implement Relevant Schema: Use schema.org markup (e.g., Article, FAQPage, HowTo, Product, Review, ImageObject, VideoObject) to provide explicit signals to LLMs about the type of content you have and its key elements.

- Match Visible Content: Ensure that any information included in your structured data markup is also clearly visible on the webpage. Inconsistencies can lead to issues.
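One possible way to generate this markup is sketched below: a small helper that builds schema.org `Article` data as JSON-LD and wraps it in a script tag for the page head. The helper name and all sample values (author, date, description) are placeholders, and the property set shown is a minimal assumption; check the schema.org definitions for the properties that apply to your content.

```python
import json

def article_jsonld(headline: str, author_name: str,
                   date_published: str, description: str) -> str:
    """Build a JSON-LD <script> tag describing an Article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date string
        "description": description,
    }
    # Escape "</" so a value can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"

# Placeholder values for illustration only.
tag = article_jsonld(
    headline="How to Optimize Content for LLMs",
    author_name="Jane Doe",
    date_published="2025-01-15",
    description="A guide to structuring content for AI-driven search.",
)
print(tag)
```

Because the values are passed in from the same source that renders the page, this approach also makes it easier to keep the markup consistent with the visible content.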

6. Embrace a Question-and-Answer Format

Given that many LLM interactions are question-based, directly answering common queries is a powerful optimization technique.

- Direct Answers: For every common question related to your topic, provide a concise, direct answer that can stand alone. Follow it with more elaborate explanations.

- Dedicated FAQ Sections: Create a comprehensive FAQ section at the end of relevant articles. These sections are prime candidates for LLMs to pull information for direct answers or AI Overviews.

- Anticipate Follow-Up Questions: Think about the logical next questions a user might have after receiving an initial answer and address those within your content.

7. Monitor, Analyze, and Iterate

The LLM landscape is dynamic. Continuous monitoring and adaptation are crucial for sustained success.

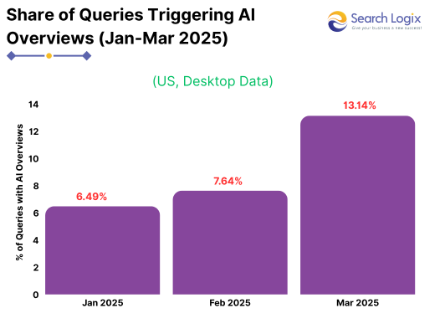

- Track AI-Driven Traffic: Monitor your analytics to understand how users are arriving from AI-powered search interfaces. While direct clicks might shift, the quality of traffic from AI Overviews can be higher, with users potentially spending more time on your site.

- Identify LLM Citations: Use tools (or manual checks) to see when your content or brand is cited in AI-generated responses. This provides direct feedback on your optimization efforts.

- Update Content Regularly: LLMs favor fresh, relevant content. Regularly review and update your articles with the latest information, statistics, and trends. For time-sensitive topics, update timestamps.

- Test with LLMs: Use various LLMs (e.g., ChatGPT, Gemini, Claude, Perplexity) to query information related to your content. See how they interpret your page, what answers they provide, and if they cite your site. This gives you direct insight into AI’s understanding.
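For the traffic-tracking step, a simple starting point is to bucket referrer URLs from your analytics export by AI assistant. The sketch below assumes you already have a list of referrer URLs; the hostname-to-label mapping is an assumption for illustration and should be verified against the referrers that actually appear in your own logs.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed hostnames; confirm these against your own analytics data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
}

def classify_referrer(referrer_url: str) -> str:
    """Map a referrer URL to an AI assistant label, or 'other'."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host, "other")

def count_ai_referrals(referrer_urls: list[str]) -> Counter:
    """Count visits per referrer label."""
    return Counter(classify_referrer(url) for url in referrer_urls)

# Hypothetical referrers from an analytics export.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=llm+content",
    "https://www.google.com/search?q=llm+content",
    "https://chatgpt.com/c/example",
    "",
]
print(count_ai_referrals(referrers))
```

Tracking these counts over time gives a baseline for judging whether content updates change how often AI interfaces send visitors to your site.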

Best Practices to Structure Content for LLMs

When optimizing content for Large Language Models (LLMs), structure is just as important as substance. While traditional SEO focuses on search engines like Google, optimizing for LLMs—such as ChatGPT, Gemini, or Claude—requires a more human-first, context-rich approach.

Structured content makes it easier for LLMs to interpret, summarize, and quote your material in natural language responses.

Let’s dive into the most effective formatting strategies to make your content LLM-ready in 2025 and beyond.

1. Use Clear Headings & Subheadings

Clear and hierarchical headings not only enhance readability for humans but also help LLMs parse and index your content more effectively.

- Use H2 tags for major themes and topics. Think of these as chapter titles in your content.

- Use H3 (or H4 where needed) for subtopics, steps, or breakdowns under each H2.

This structure mimics the outline formats LLMs are trained on and enables better contextual mapping between content blocks.
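A heading outline can be checked automatically. The sketch below, which uses only Python's standard `html.parser`, collects the headings on a page and flags any that skip a level (for example, an H4 directly under an H2). The class and function names are hypothetical, and the rule it enforces is one reasonable reading of "hierarchical headings", not an official standard.

```python
from html.parser import HTMLParser

class HeadingCollector(HTMLParser):
    """Collects (level, text) pairs for h1..h6 elements in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            text = "".join(self._buffer).strip()
            self.headings.append((self._level, text))
            self._level = None

def skipped_levels(html: str) -> list[str]:
    """Return the text of headings that jump more than one level deeper."""
    collector = HeadingCollector()
    collector.feed(html)
    problems = []
    previous = 0
    for level, text in collector.headings:
        if level > previous + 1:
            problems.append(text)
        previous = level
    return problems

page = (
    "<h1>How to Optimize Content for LLMs</h1>"
    "<h2>What is LLM Optimization?</h2>"
    "<h4>Tokenization details</h4>"
    "<h2>How Do LLMs Work?</h2>"
)
print(skipped_levels(page))
```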

Framing headings as questions helps align with natural user queries. This increases the chance your content is surfaced in AI-generated answers.

Why it works:

- LLMs are often prompted by user questions.

- When your headings echo common phrasing like “How does…”, “Why is…”, or “What is…”, it improves retrievability and relevance.

Put your primary or secondary keyword (e.g., “optimize content for LLMs”) within the first few words of your heading. This:

- Improves semantic relevance

- Enhances topical clarity for both LLMs and traditional search engines

- Boosts visibility in AI summarization tools

Pro Tip: Use keyword variations in different subheadings to signal breadth of coverage.

2. Answer Questions Directly

LLMs often extract short, direct answers from text when formulating responses. If your content provides a succinct answer upfront, it becomes highly quotable by AI models.

How to do it:

- Start sections with a clear, 1–2 sentence summary of the answer.

- Follow with detailed explanations, examples, or case studies.

This layered format caters to both AI citation and reader depth.

3. Use Bullet Lists & Tables for Easy Parsing

LLMs thrive on structured data. Bullet points, numbered lists, and tables offer a clean, easy-to-digest format that models can understand with minimal ambiguity.

Why this helps:

- Prevents information overload

- Breaks complex ideas into bite-sized chunks

- Gives generative AI models clearly delimited items to extract and summarize

Use cases:

- List pros and cons

- Step-by-step guides

- Compare tools, platforms, or techniques in a table

4. Include FAQs That Target Search and AI Queries

FAQs are a goldmine for LLM optimization.

- LLMs often pull answers from FAQ-style blocks because they offer direct, standalone explanations.

- They align perfectly with zero-click answers or snippet generation.

How to make it effective:

- Include 5–10 FAQs at the end of each long-form article.

- Use real questions your audience is asking (look at forums, Google’s “People Also Ask,” or AnswerThePublic).

- Keep answers short but rich in value (50–150 words).

- Embed relevant keywords naturally in both the question and the answer.

Pro Tip: Use schema.org markup (the FAQPage type) to help search engines and LLMs identify these blocks programmatically.
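As an illustration of the FAQ guidance, the sketch below turns a list of question-and-answer pairs into schema.org `FAQPage` JSON-LD and flags answers that fall outside the 50–150 word range suggested above. The function names and the sample FAQ are hypothetical.

```python
import json

def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)

def answers_outside_range(faqs: list[tuple[str, str]],
                          low: int = 50, high: int = 150) -> list[str]:
    """Return questions whose answers are shorter or longer than the target."""
    return [q for q, a in faqs if not low <= len(a.split()) <= high]

# Hypothetical FAQ entry; the answer is intentionally too short.
faqs = [
    ("What is LLM optimization?",
     "It is the practice of structuring content so AI systems can parse and cite it."),
]
print(faq_jsonld(faqs))
print(answers_outside_range(faqs))
```

The JSON output would be embedded in a `<script type="application/ld+json">` tag, and the questions and answers in it should match the FAQ text that is visible on the page.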

5. Optimize Readability for Humans & Machines

While technical accuracy matters, content must also be effortless to read. Readability is crucial for:

- Retaining human visitors

- Assisting LLMs in extracting accurate, unambiguous interpretations

Best readability practices:

- Use short sentences (12–18 words on average)

- Stick to common vocabulary; avoid excessive jargon

- Use active voice more than passive

- Break long paragraphs into 2–3 sentence chunks

- Add visuals, infographics, or screenshots where helpful

Flesch Reading Ease:

Aim for a score above 60 (roughly an 8th- to 9th-grade reading level). Tools like Yoast SEO, Hemingway Editor, or Grammarly can help assess and improve this metric.
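If you want a quick estimate without a dedicated tool, the standard Flesch Reading Ease formula is 206.835 − 1.015 × (words ÷ sentences) − 84.6 × (syllables ÷ words). The sketch below applies it with a rough, vowel-group syllable counter, which is an approximation assumed here for simplicity, so scores may differ slightly from those reported by the tools mentioned above.

```python
import re

def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups, discounting a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def flesch_reading_ease(text: str) -> float:
    """Compute the Flesch Reading Ease score for a block of English text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word

simple = "The cat sat on the mat. The dog ran to the park."
dense = ("Organizational implementation necessitates comprehensive "
         "interdisciplinary collaboration.")

print(round(flesch_reading_ease(simple), 1))
print(round(flesch_reading_ease(dense), 1))
```

Short sentences built from short words score high; long sentences packed with multi-syllable terms score low, which is why the sentence-length and vocabulary advice above moves this metric.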

People-First Content is AI-First Content

Optimizing content for Large Language Models isn’t about gaming an algorithm; it’s about crafting exceptionally high-quality, trustworthy, and user-friendly content.

By focusing on clear structure, semantic depth, strong E-E-A-T, multimodal engagement, and a direct answer approach, you naturally create content that serves human readers profoundly well.

As we move further into 2025 and beyond, the content that earns top SERP positions and attracts valuable organic traffic will be content that satisfies user intent, provides comprehensive value, and is easily digestible by AI systems.

Embrace this people-first approach, and your content will be well-positioned to thrive in the AI-powered search future.

How Can eSearch Logix Help with Content Optimization for LLMs?

As large language models (LLMs) like ChatGPT, Claude, and Google Gemini increasingly influence how users access information, traditional SEO alone is no longer enough.

At eSearch Logix, we offer AI SEO services that include customized content strategies, aligned with how LLMs understand, process, and surface information.

Here’s how we help you stay ahead:

- LLM-Centric Content Structuring

- Fact-Rich, High-Quality Content Creation

- Answer Engine Optimization (AEO)

- Topical Authority Development

- Entity-Based Optimization

- Performance Analytics & AI Adaptation

Whether you’re building an enterprise knowledge hub or improving your blog’s visibility in AI-powered answers, eSearch Logix provides the strategy, execution, and optimization expertise you need to stay ahead in the LLM-driven content era.