Learning Python gives you an edge over the competition and lets you take your website’s SEO to new heights. With Python, you can access information and insights that traditional SEO tools do not provide, at a speed that matches fast-paced corporate life.

With Python, you can automate repetitive tasks, which frees you to take on other projects and give more attention to other areas of your business. So, if you want to work faster and get results beyond the boundaries of conventional SEO, learning Python will be a great help.

Why Use Python for SEO?

It goes without saying that learning programming and data science makes you more productive, but you have to put in the work to make it happen. Here are a few reasons why learning Python can be a smart move and benefit your website’s SEO:

1. Multiple sources of data

No single tool can give you a complete view of your company. Any thorough analysis usually draws on data from several sources. To get a more complete picture of your performance and make informed decisions about the company, you will always need to aggregate data from many sources. You will have to “speak” to the tools yourself because they rarely “talk” to one another.

Consider a seemingly straightforward question: does the bounce rate of your discounted items differ from that of items that are not discounted? If the URL or page title doesn’t mention the discount, Google Analytics alone won’t be of much use to you.

You’ll need to either manually crawl the pages or employ a crawler to do it for you. You must instruct the crawler to extract the page components containing the discount details.

You now have two tables:

- The crawler table, which lists URLs and discount information.

- The analytics table, which lists URLs and bounce rates.

Your task becomes easier once you merge the two into a single, larger table, making sure that the URLs in the first table line up properly with the URLs in the second.
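As a rough sketch, merging the two tables could look like this with pandas. The choice of pandas is an assumption (the article does not name a library for this step), and the table contents, column names, and the normalize_url helper are all hypothetical.

```python
import pandas as pd
from urllib.parse import urlsplit


def normalize_url(url: str) -> str:
    """Build a comparable key: lowercase scheme and host, no trailing slash.

    Query strings and fragments are dropped, which is an assumption that
    may not suit every site.
    """
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return f"{parts.scheme.lower()}://{parts.netloc.lower()}{path}"


# Hypothetical crawler export: one row per URL with the discount found on the page.
crawl = pd.DataFrame({
    "url": ["https://example.com/shoes/", "https://example.com/hats"],
    "discount_pct": [20, 0],
})

# Hypothetical analytics export: one row per URL with its bounce rate.
analytics = pd.DataFrame({
    "url": ["https://Example.com/shoes", "https://example.com/hats/"],
    "bounce_rate": [0.41, 0.63],
})

# Add the same normalized key to both tables so the URLs line up.
for table in (crawl, analytics):
    table["url_key"] = table["url"].map(normalize_url)

merged = crawl.merge(
    analytics[["url_key", "bounce_rate"]],
    on="url_key",
    how="left",              # keep every crawled page, even without analytics data
    validate="one_to_one",   # fail loudly if a URL appears twice in either table
)
print(merged[["url_key", "discount_pct", "bounce_rate"]])
```

Normalizing before merging matters because the two tools may report the same page with different casing or trailing slashes.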

2. New Possibilities

Building on the example above, now that you have the data you could ask a more specific question, such as “How big does the discount have to be for the bounce rate to drop by X%?” The crawl also gives you H1, H2, and H3 headings, meta descriptions, load times, and more. Why not check whether any of those are correlated with conversion rate, time on page, bounce rate, and so on?
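A minimal sketch of that kind of check, again assuming pandas. The numbers below are made up purely to show the calls, and a correlation on its own does not tell you that the discount caused the change.

```python
import pandas as pd

# Hypothetical merged table; the values are invented for illustration only.
pages = pd.DataFrame({
    "discount_pct": [0, 5, 10, 20, 30],
    "load_time_s": [2.1, 1.8, 2.5, 1.9, 2.2],
    "bounce_rate": [0.66, 0.61, 0.58, 0.47, 0.40],
})

# Pearson correlation of every other column against bounce rate.
correlations = pages.corr()["bounce_rate"].drop("bounce_rate")
print(correlations.sort_values())

# Average bounce rate for discounted versus non-discounted pages.
by_discount = pages.groupby(pages["discount_pct"] > 0)["bounce_rate"].mean()
print(by_discount)
```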

3. Automation

To put the example above into practice, you will need to write some code, which is a set of instructions for the computer to follow. Once it is written and you have verified that it works properly, you can run it again the following month by simply pressing “run.” Alternatively, you might build on the analysis each month until you have a mature and reliable procedure for that specific situation.

Handling massive amounts of data, repeating time-consuming tasks, combining tables, and importing data from many sources are all tedious and, in many cases, impossible to do manually.

All of this (handling multiple data sources, automation, productivity, and doing things you simply can’t do manually) lets you spend more time on strategic work such as analysis and insights rather than on the minute details of merging two tables or crawling a few thousand pages.

Web Scraping Using Python

Web scraping is an automated method of gathering data from the internet. It is a process of creating an agent that can automatically collect, parse, download, and arrange meaningful information from the web. It is also referred to as web data mining or web harvesting.

Installing Python on Linux:

Step 1: Open the terminal on your system and run the following command:

sudo apt-get install python3

If you wish to install a specific version of Python, append the minor version number to the package name, for example:

sudo apt-get install python3.X

(Here X denotes the minor version, as in Python 3.6 or Python 3.8. Which versions are available depends on your distribution’s package repositories.)

This command installs Python on your system.

You can check which version of Python is installed using the command:

python3 --version

Step 2: Once Python is installed, type python3 in your terminal to start the interactive interpreter.

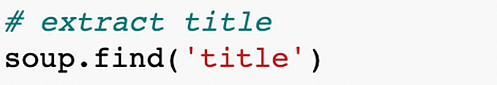

Using Python Requests and Beautiful Soup to Extract SEO Information from a Website

In this section, we’re going to explore how web scraping can be used to extract SEO information from a website. We’ll apply Python modules such as Requests and Beautiful Soup to extract information such as Links, Titles, Meta Tags, Word Count, and more.

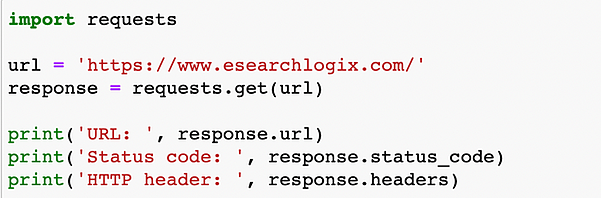

How to Use Python Requests

Step 1: Install the Python Requests package

$ pip install requests

Step 2: Import the Requests module

import requests

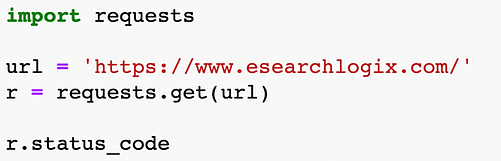

Step 3: Make a request using the GET method

Use the GET method and store the response in a variable:

r = requests.get(url)

Step 4: Use the response’s attributes and methods to read it

You can interact with the Python Requests response object through its methods, e.g., r.json(), and its attributes, e.g., r.status_code.
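Put together, the steps above might look like the following sketch. The fetch helper and the example.com URL are placeholders, not part of the Requests library.

```python
import requests


def fetch(url, timeout=10):
    """Return the Response for a GET request, or None if the request fails."""
    try:
        return requests.get(url, timeout=timeout)
    except requests.exceptions.RequestException as error:
        print(f"Request to {url} failed: {error}")
        return None


if __name__ == "__main__":
    r = fetch("https://example.com")  # placeholder URL
    if r is not None:
        print(r.status_code)                  # attribute: HTTP status code
        print(r.headers.get("Content-Type"))  # attribute: response headers
        print(len(r.text))                    # attribute: decoded body as a string
```

Setting a timeout is optional, but without one a request to an unresponsive server can wait indefinitely.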

Python Requests Install

Use pip to install the latest version of Python Requests.

$ pip install requests

To follow along with our examples, you’ll also need to install Beautiful Soup:

$ pip install beautifulsoup4

The urllib module is part of Python’s standard library, so it does not need to be installed with pip.

Import the Requests Module

Use the import keyword for this:

import requests

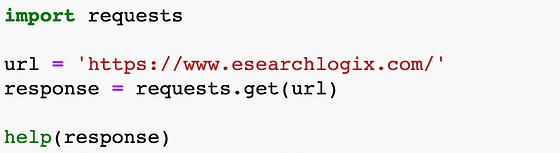

Get Requests

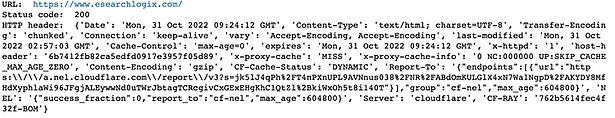


Response Methods and Attributes

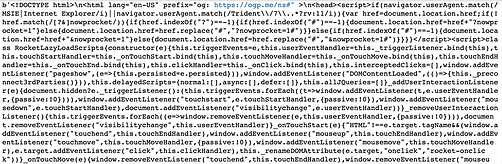

The server’s response to the HTTP request is contained in the response object.

You can use help() to investigate the details of the Response object.
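In an interactive session, help(requests.Response) prints the full documentation. For a shorter overview, a small sketch like this lists the public names available on a response object. It uses an empty Response created by hand, which is only for illustration; real responses come from calls such as requests.get().

```python
import requests

# An empty Response instance, created only so its names can be inspected.
empty_response = requests.Response()

# Keep the public names (those that do not start with an underscore).
public_names = [name for name in dir(empty_response) if not name.startswith("_")]
print(public_names)
```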

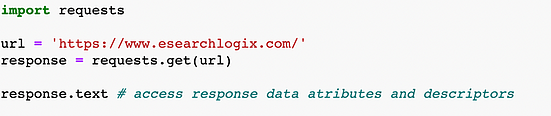

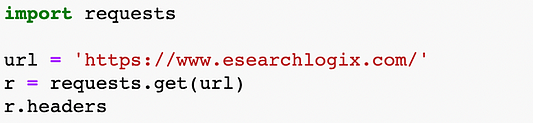

Access the Response Methods and Attributes

You can access the attributes and methods of the response object returned by a request.

Attributes are accessed using object.attribute notation, and methods are called using object.method() notation.
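As an illustration, the sketch below gathers several commonly used attributes into one dictionary. The summarize_response helper and the example.com URL are hypothetical; the attributes it reads (status_code, ok, headers, text, history, url) belong to the Requests response object.

```python
import requests


def summarize_response(response):
    """Collect a few commonly used values from a requests.Response object."""
    return {
        "status_code": response.status_code,                   # numeric HTTP status
        "ok": response.ok,                                     # True when the status is below 400
        "content_type": response.headers.get("Content-Type"),  # headers behave like a dict
        "html_length": len(response.text),                     # decoded body as a string
        "redirects": [hop.url for hop in response.history],    # earlier responses in a redirect chain
        "final_url": response.url,                             # URL after any redirects
    }


if __name__ == "__main__":
    try:
        r = requests.get("https://example.com", timeout=10)  # placeholder URL
    except requests.exceptions.RequestException as error:
        print(f"Request failed: {error}")
    else:
        print(summarize_response(r))
```

Methods are called the same way but with parentheses, for example r.json() or r.raise_for_status().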

Output:

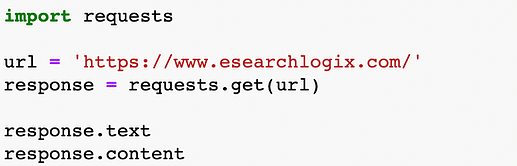

Process the Response

Access the Python Requests JSON

In Python Requests, use the response.json() method to access the JSON content of the response. If the response body is not valid JSON, the decoder raises requests.exceptions.JSONDecodeError.
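One way to guard against that exception is sketched below. The safe_json name is hypothetical. Catching ValueError is a deliberate choice: the JSON decoding errors raised by Requests are ValueError subclasses, so the same code also works on older versions that predate requests.exceptions.JSONDecodeError.

```python
import requests


def safe_json(response):
    """Return the decoded JSON body, or None when the body is not valid JSON."""
    try:
        return response.json()
    except ValueError:
        # Recent Requests versions raise requests.exceptions.JSONDecodeError here,
        # which is a ValueError subclass, as are the errors older versions raise.
        return None
```

For example, safe_json(requests.get(url)) returns a dict or list for a JSON API and None for an ordinary HTML page.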

Show Status Code

Output:

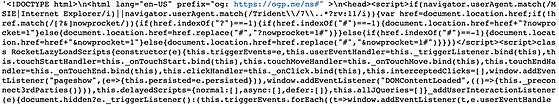

Get the HTML of the Page

Output:

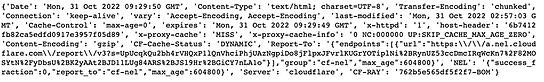

Show HTTP Header

Output:

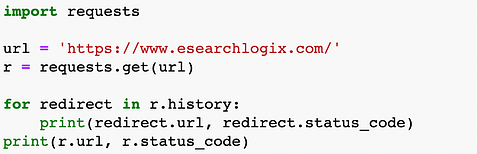

Show Redirections

Output:

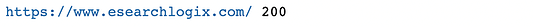

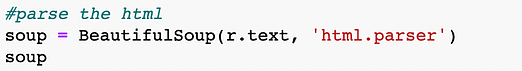

Parse the HTML with Requests and BeautifulSoup

Parsing with BeautifulSoup
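A minimal parsing sketch follows. It uses a made-up inline HTML string so that it runs without a network call; with a live page you would pass r.text from Requests instead.

```python
from bs4 import BeautifulSoup

# Hypothetical sample page, inlined so the example needs no network access.
html = """
<html>
  <head><title>Blue Running Shoes | Example Store</title></head>
  <body>
    <h1>Blue Running Shoes</h1>
    <p>Lightweight shoes for daily runs.</p>
  </body>
</html>
"""

# "html.parser" ships with Python, so no extra parser needs to be installed.
soup = BeautifulSoup(html, "html.parser")

print(soup.title.get_text())       # text inside <title>
print(soup.find("h1").get_text())  # text of the first <h1>
print(soup.find("p").get_text())   # text of the first <p>
```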


Getting main SEO tags from a webpage
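The sketch below pulls a handful of common SEO elements out of a page in one pass. The extract_seo_tags function, the set of tags it collects, and the sample HTML are assumptions chosen for illustration; adjust them to whatever your audit needs.

```python
from bs4 import BeautifulSoup


def extract_seo_tags(html):
    """Return the title, meta description, robots, canonical, H1s and a rough word count."""
    soup = BeautifulSoup(html, "html.parser")
    description = soup.find("meta", attrs={"name": "description"})
    robots = soup.find("meta", attrs={"name": "robots"})
    canonical = soup.find("link", attrs={"rel": "canonical"})
    body = soup.body or soup  # fall back to the whole document if <body> is missing
    return {
        "title": soup.title.get_text(strip=True) if soup.title else None,
        "description": description.get("content") if description else None,
        "robots": robots.get("content") if robots else None,
        "canonical": canonical.get("href") if canonical else None,
        "h1": [h.get_text(strip=True) for h in soup.find_all("h1")],
        # Rough count: whitespace-separated words in the body text.
        "word_count": len(body.get_text(" ", strip=True).split()),
    }


# Hypothetical sample page.
sample = """<html><head>
<title>Blue Running Shoes | Example Store</title>
<meta name="description" content="Lightweight blue running shoes.">
<link rel="canonical" href="https://example.com/shoes/blue">
</head><body>
<h1>Blue Running Shoes</h1>
<p>Lightweight shoes for daily runs.</p>
</body></html>"""

tags = extract_seo_tags(sample)
for key, value in tags.items():
    print(f"{key}: {value}")
```

Each lookup falls back to None when the tag is absent, which makes missing titles or descriptions easy to spot across many pages.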

Output:

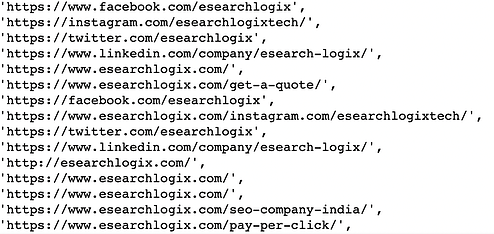

Extracting All the Links on a Page

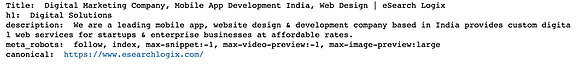

Extracting Title


Extracting Meta


Extracting Meta with Specific Attribute
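Here is a small sketch of both approaches: listing every meta tag, then selecting one by a specific attribute. The sample HTML is made up. Note that the filter goes in the attrs dictionary, because a name keyword passed directly to find() would be read as the tag name.

```python
from bs4 import BeautifulSoup

# Hypothetical <head> section.
html = """<head>
<meta charset="utf-8">
<meta name="description" content="Lightweight blue running shoes.">
<meta name="robots" content="index, follow">
<meta property="og:title" content="Blue Running Shoes">
</head>"""
soup = BeautifulSoup(html, "html.parser")

# Every <meta> tag, with its attributes as a plain dict.
all_meta = [tag.attrs for tag in soup.find_all("meta")]

# Only the tag whose name attribute is "description".
description = soup.find("meta", attrs={"name": "description"})

# Open Graph tags use "property" instead of "name".
og_title = soup.find("meta", attrs={"property": "og:title"})

print(len(all_meta))
print(description["content"])
print(og_title["content"])
```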

Output:

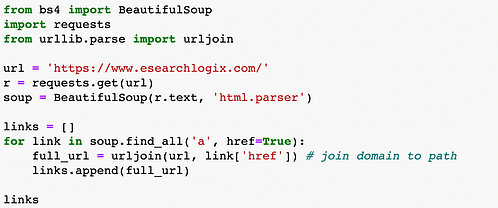

Extracting All the Links on a Page
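A possible way to collect the links is sketched below. The extract_links function, the split into internal and external links, and the sample markup are assumptions for illustration. urljoin and urlsplit come from the standard library’s urllib.parse module.

```python
from urllib.parse import urljoin, urlsplit

from bs4 import BeautifulSoup


def extract_links(html, base_url):
    """Return (internal, external) lists of absolute URLs found in <a href> tags."""
    soup = BeautifulSoup(html, "html.parser")
    site = urlsplit(base_url).netloc
    internal, external = [], []
    for anchor in soup.find_all("a", href=True):
        absolute = urljoin(base_url, anchor["href"])  # resolve relative links
        parts = urlsplit(absolute)
        if parts.scheme not in ("http", "https"):
            continue  # skip mailto:, tel:, javascript: and similar
        if parts.netloc == site:
            internal.append(absolute)
        else:
            external.append(absolute)
    return internal, external


# Hypothetical markup with one internal, one external and one mailto link.
html = (
    '<a href="/sale">Sale</a> '
    '<a href="https://other.example/">Partner</a> '
    '<a href="mailto:hi@example.com">Mail</a>'
)
internal, external = extract_links(html, "https://example.com/shoes")
print(internal)
print(external)
```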


Improve the Request

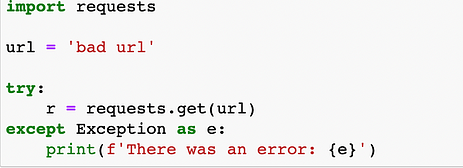
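One common set of improvements, shown here as an assumption rather than as the exact code from the screenshot, is to reuse a session, send a descriptive User-Agent header, and always set a timeout. The User-Agent value and the get_page helper are made-up placeholders.

```python
import requests

# A session reuses connections and applies the same headers to every request.
session = requests.Session()
session.headers.update({"User-Agent": "my-seo-audit-script/0.1"})  # placeholder value


def get_page(url, timeout=10):
    """GET a URL with the shared session and a timeout in seconds."""
    return session.get(url, timeout=timeout)


# Inspect the headers that would be sent, without touching the network.
prepared = session.prepare_request(requests.Request("GET", "https://example.com"))
print(prepared.headers["User-Agent"])
```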

Handle Errors
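A sketch of one way to handle the most common failures is shown below. The check_url function and its return strings are hypothetical; the exception classes are the ones Requests provides in requests.exceptions.

```python
import requests


def check_url(url, timeout=10):
    """Return a short status string for the URL instead of raising an exception."""
    try:
        response = requests.get(url, timeout=timeout)
        response.raise_for_status()  # raises HTTPError for 4xx and 5xx responses
    except requests.exceptions.HTTPError as error:
        return f"HTTP error: {error.response.status_code}"
    except requests.exceptions.Timeout:
        return "Timed out"
    except requests.exceptions.ConnectionError:
        return "Connection failed"
    except requests.exceptions.RequestException as error:
        return f"Request failed: {error}"  # anything else, such as a malformed URL
    return f"OK: {response.status_code}"


if __name__ == "__main__":
    print(check_url("https://example.com"))  # placeholder URL
```

The more specific exceptions are listed first because RequestException is the base class of all of them and would otherwise catch everything.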

Output:

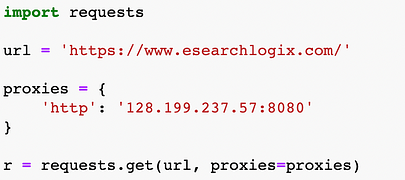

Use Proxies
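Requests accepts a dictionary that maps each scheme to a proxy URL. The sketch below attaches one to a session; the proxy addresses are placeholders and must be replaced with proxies you are permitted to use.

```python
import requests

# Placeholder proxy addresses (hypothetical); replace them with your own.
proxies = {
    "http": "http://10.10.1.10:3128",
    "https": "http://10.10.1.10:1080",
}

session = requests.Session()
session.proxies.update(proxies)  # requests made with this session go through the proxies
print(session.proxies)

# For a single request, the same mapping can be passed directly:
# requests.get("https://example.com", proxies=proxies, timeout=10)
```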

What is Python Requests Library?

Requests is a popular HTTP library for Python that is commonly used in web scraping. It lets you fetch the raw HTML of a web page, which can then be parsed to retrieve the data.

What is BeautifulSoup?

Beautiful Soup is a Python package for extracting data from HTML and XML files. Working with your preferred parser, it provides idiomatic ways of navigating, searching, and modifying the parse tree.

Conclusion

Python has opened up new opportunities for the SEO world. You can use it to extract information about your website, automate repetitive work, and identify changes that can help your site rank better on search engine results pages (SERPs).